Why Developers Aren't Coming Back After Their First Session

You've fixed the tutorial. You've cleaned up the docs. You've killed the bugs early users reported. Developers still aren't coming back.

If this is your situation, you're probably running the same troubleshooting loop most founders run: something about the product isn't clicking. So you improve the product. You add a better onboarding flow. You write a getting-started guide. You watch the metrics. Nothing changes.

Here's what I keep seeing across dev tool companies stuck in this loop: the diagnosis is wrong. First-session churn usually isn't a product problem. It's a stage problem. And if you're measuring product metrics to fix a stage problem, you're going to keep shipping features that don't move retention.

The Onboarding Loop That Goes Nowhere

Most founders respond to first-session churn by improving onboarding. This makes sense on the surface—developers aren't coming back, so something about their first experience isn't working, so you fix the first experience.

The problem is that "first experience" and "first session" aren't the same thing. The onboarding flow you're fixing is one slice of the first session, and the first session is itself one slice of a journey that started before the developer ever opened your product and continues through stages that have nothing to do with your tutorial.

When you only measure what happens inside your product during session one, you're measuring a fraction of what actually determines whether someone comes back. You can optimize that fraction indefinitely and never touch the part that matters.

I call this stage blindness: diagnosing developer churn with product metrics when the drop-off is happening at the journey level.

Where Developers Actually Exit

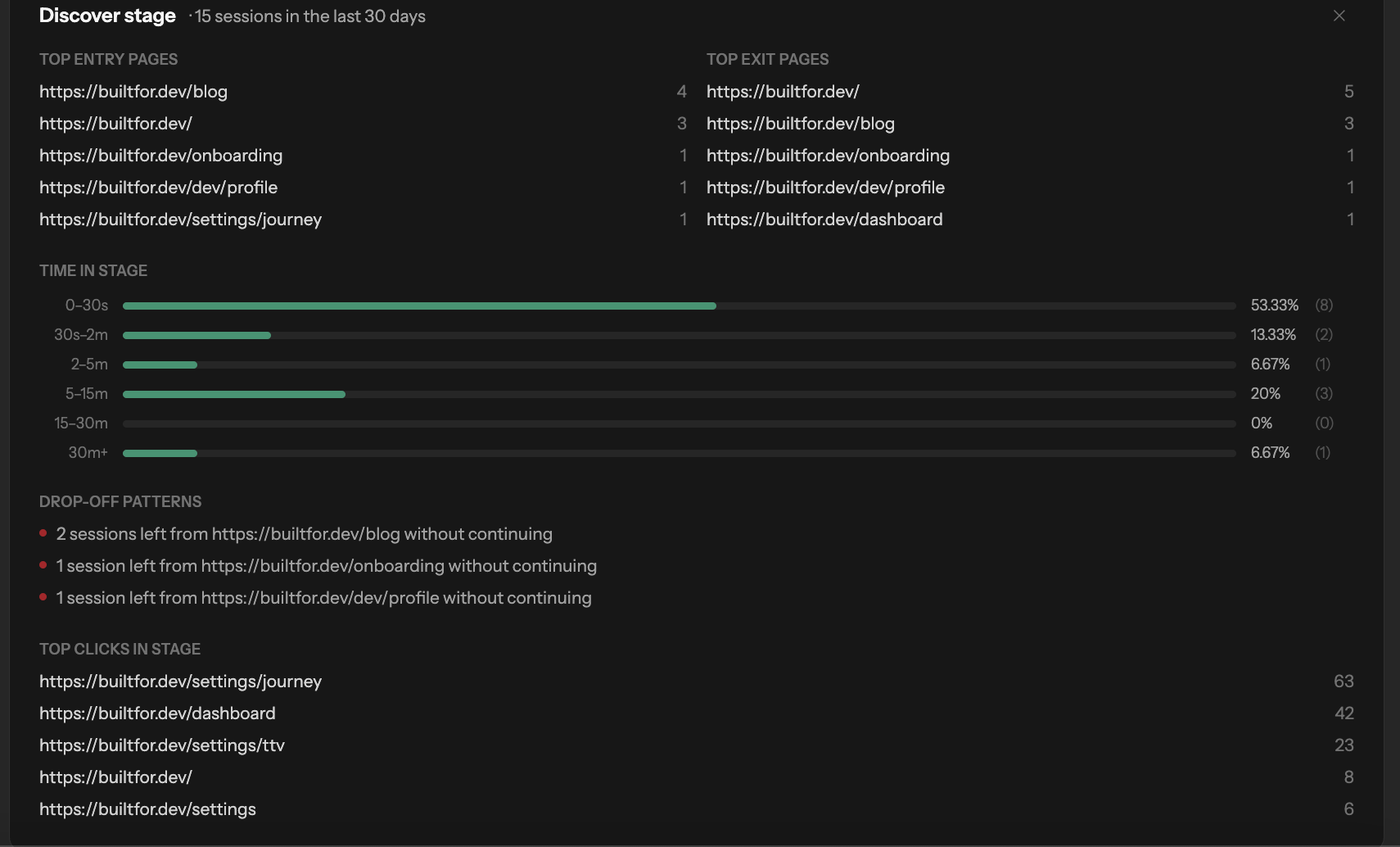

There's a framework I use to think about how developers move through any tool's ecosystem. Five stages: Discover, Evaluate, Learn, Build, Scale.

Most first-session exits happen in Evaluate and Learn. Not Build.

Discover is how they found you—search, a recommendation, a conference talk. If they're in your product, they've cleared Discover.

Evaluate is the decision stage. Before a developer commits to learning anything, they're asking a question you probably can't see on your dashboard: is this actually for me? They're scanning docs, reading changelog entries, checking the GitHub repo, looking at the community, reviewing pricing. They're trying to answer the question fast, before they invest real time. If your product doesn't give them a fast, clear answer, they're gone—and they look like a session-one exit, but the real exit happened during Evaluate.

Learn is where developers start building skills, not building product. They're running the quickstart. They're working through examples. They're hitting friction and deciding whether the friction is temporary (learning curve) or permanent (bad product fit). If the gap between your quickstart and real-world usage is too big, or if the docs assume knowledge they don't have, they exit here. Again, looks like session-one churn. Actually a stage failure.

Build is where most onboarding optimization is aimed. More tutorials, better error messages, cleaner UI. The problem: if developers are exiting in Evaluate and Learn, they never make it to Build. Improving Build doesn't help.

Scale is retention at depth—developers who've integrated your tool and are now spreading it to their team or expanding usage. You don't have a Scale problem if you're seeing first-session exits. But you won't get there without clearing the earlier stages.

What Stage Blindness Costs You

When you can't see which stage developers are exiting, you default to shipping. More features, more onboarding improvements, more docs. I've watched companies spend a quarter iterating on their Build experience when their real problem was an Evaluate stage that left developers unsure whether the tool was even meant for them.

The compounding cost: every iteration cycle you spend in the wrong stage is a cycle you're not spending on the right one. And if you're down to 4-6 months of runway, you don't have many cycles left.

The other cost is signal distortion. When you're measuring product engagement during session one and attributing poor retention to product quality, you're training yourself to look in the wrong place. The feedback loop reinforces the wrong diagnosis.

How to Diagnose Which Stage Is Failing

You can't improve what you can't see. The starting point is mapping your developer journey with enough specificity to identify where the drop-off actually happens.

Start with Evaluate. What does a developer experience between finding your product and deciding to invest time in it? What questions are they trying to answer? How fast can they answer them? If you don't know, your Evaluate stage might be invisible to you—which is exactly the problem.

Then look at Learn. What's the gap between your quickstart and a real use case? Where does that first "wait, this doesn't work the way I expected" moment happen? That's usually where Learn exits occur.
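
One way to make that mapping concrete is to tag the events you already track with a journey stage and count where non-returning developers stopped. The sketch below is a minimal illustration, not a prescribed method: the event names, the event-to-stage mapping, and the sample data are all hypothetical, and "furthest stage reached" is only a rough proxy for the stage where someone exited.

```python
from collections import Counter

# The five journey stages, in order, as named in this article.
STAGES = ["Discover", "Evaluate", "Learn", "Build", "Scale"]

# Hypothetical mapping from tracked event names to stages.
# Replace these with whatever events your own analytics actually records.
EVENT_STAGE = {
    "landing_page_view": "Discover",
    "docs_view": "Evaluate",
    "pricing_view": "Evaluate",
    "quickstart_started": "Learn",
    "quickstart_completed": "Learn",
    "first_real_api_call": "Build",
    "teammate_invited": "Scale",
}


def furthest_stage(events):
    """Return the latest stage any of a developer's events maps to, or None."""
    reached = [STAGES.index(EVENT_STAGE[e]) for e in events if e in EVENT_STAGE]
    return STAGES[max(reached)] if reached else None


def exit_stage_counts(events_by_developer):
    """Count developers by the furthest stage they reached before leaving."""
    counts = Counter()
    for events in events_by_developer.values():
        stage = furthest_stage(events)
        if stage is not None:
            counts[stage] += 1
    # Report every stage in journey order, including stages with zero exits.
    return {stage: counts.get(stage, 0) for stage in STAGES}


# Made-up sample: developers who did not return after session one.
churned = {
    "dev_a": ["landing_page_view", "docs_view", "pricing_view"],
    "dev_b": ["landing_page_view", "docs_view", "quickstart_started"],
    "dev_c": ["landing_page_view", "docs_view"],
    "dev_d": ["landing_page_view", "quickstart_started",
              "quickstart_completed", "first_real_api_call"],
}

print(exit_stage_counts(churned))
```

If most of your non-returning developers stop at Evaluate or Learn in a count like this, more Build-stage onboarding work is unlikely to reach them.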

If you want a fast read on where your developer journey is failing, take the Developer Adoption Score at builtfor.dev/score. It's free, takes about 5 minutes, and gives you an actual score on your journey rather than a generic "improve your onboarding" recommendation. Want to go deeper? Our product offering tracks real developer behavior across every stage so you can see exactly where the drop-off happens.

The Real Question to Ask

The next time a developer doesn't come back after session one, the question isn't "what was wrong with their first experience?" The question is "which stage failed them?"

Those are different questions with different answers. And until you can ask the right one, the onboarding loop keeps going.

You've already tried fixing the product. That's not what's broken.